"Models That Respect the Discontinuous Nature of Likert Scales"

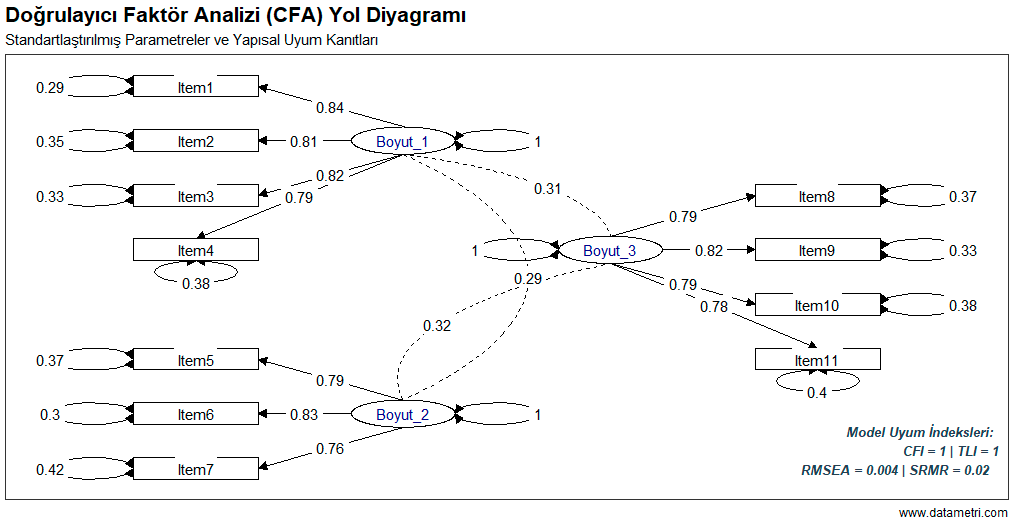

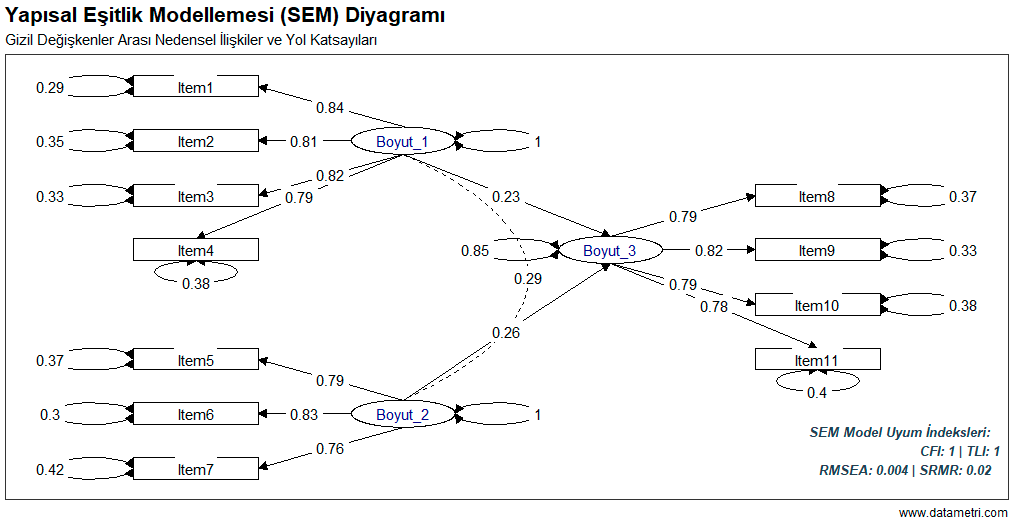

Likert-type scales (e.g., 1 = Strongly Disagree to 5 = Strongly Agree), which are widely used in the social sciences and medicine, yield ordinal rather than continuous data. The traditional Maximum Likelihood (ML) estimator assumes that the data are continuous and multivariate normal. Applying ML to skewed Likert data violates this assumption and tends to produce artificially low factor loadings (attenuation bias).
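The attenuation effect can be illustrated with a small simulation. The sketch below is not the analysis reported here: the latent correlation (0.6), the cut points, and the sample size are assumed values chosen only to mimic a ceiling effect, and `simulate`, `to_likert`, and `pearson` are hypothetical helper names. It draws two correlated latent normal variables, cuts them into five skewed categories, and compares the Pearson correlation before and after categorization.

```python
import math
import random


def pearson(x, y):
    """Plain Pearson correlation of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def to_likert(z, cuts):
    """Map a latent score to a category 1..len(cuts)+1 using fixed cut points."""
    return 1 + sum(z > c for c in cuts)


def simulate(rho=0.6, cuts=(-1.8, -1.3, -0.7, 0.0), n=20000, seed=1):
    """Return (latent correlation, correlation of the categorized items).

    The cut points are assumed values that place about half of the
    responses in the top category (a ceiling effect).
    """
    rng = random.Random(seed)
    latent_x, latent_y = [], []
    for _ in range(n):
        u, v = rng.gauss(0.0, 1.0), rng.gauss(0.0, 1.0)
        latent_x.append(u)
        latent_y.append(rho * u + math.sqrt(1.0 - rho ** 2) * v)
    item_x = [to_likert(z, cuts) for z in latent_x]
    item_y = [to_likert(z, cuts) for z in latent_y]
    return pearson(latent_x, latent_y), pearson(item_x, item_y)


r_latent, r_items = simulate()
print(f"latent r = {r_latent:.3f}, Pearson r on 5-point items = {r_items:.3f}")
```

Because factor loadings are estimated from these correlations, a correlation that shrinks after categorization carries over into smaller loadings when the items are treated as continuous.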

To address this methodological weakness, we apply the WLSMV estimator (diagonally weighted least squares with a mean- and variance-adjusted test statistic), which works from polychoric correlations and their asymptotic variance-covariance matrix. Rather than modeling the observed item scores directly, this approach models the continuous "latent response distribution" assumed to underlie each item, which generally yields less biased parameter estimates for ordinal data.
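To make the "latent response distribution" idea concrete, the following is a minimal two-step polychoric sketch using only the Python standard library. It is an illustration under stated assumptions, not the routine used by any particular SEM package: the function names (`cut_points`, `cell_prob`, `polychoric`), the Simpson quadrature, the golden-section search, and the example contingency table are all hypothetical choices. Step one converts the cumulative category proportions of each item into cut points on a standard normal scale; step two searches for the latent correlation that maximizes the likelihood of the observed cross-tabulation.

```python
import math
from statistics import NormalDist


def phi(x):
    """Standard normal density."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def Phi(x):
    """Standard normal CDF (handles +/- infinity)."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def cut_points(marginal_counts):
    """Step 1: cumulative proportions -> latent cut points.

    Assumes the first and last categories were both observed, so no
    cumulative proportion is exactly 0 or 1.
    """
    total = float(sum(marginal_counts))
    cum, taus = 0.0, [-math.inf]
    for c in marginal_counts[:-1]:
        cum += c
        taus.append(NormalDist().inv_cdf(cum / total))
    taus.append(math.inf)
    return taus


def cell_prob(a_lo, a_hi, b_lo, b_hi, rho, steps=200):
    """P(a_lo < X < a_hi, b_lo < Y < b_hi) for a standard bivariate normal,
    via Simpson's rule over x (the tails are truncated at +/- 8)."""
    lo, hi = max(a_lo, -8.0), min(a_hi, 8.0)
    s = math.sqrt(1.0 - rho * rho)
    h = (hi - lo) / steps
    total = 0.0
    for k in range(steps + 1):
        x = lo + k * h
        w = 1 if k in (0, steps) else (4 if k % 2 else 2)
        total += w * phi(x) * (Phi((b_hi - rho * x) / s) - Phi((b_lo - rho * x) / s))
    return total * h / 3.0


def log_lik(table, rho, row_taus, col_taus):
    """Multinomial log-likelihood of the table for a given latent correlation."""
    ll = 0.0
    for i, row in enumerate(table):
        for j, n in enumerate(row):
            if n > 0:
                p = cell_prob(row_taus[i], row_taus[i + 1],
                              col_taus[j], col_taus[j + 1], rho)
                ll += n * math.log(max(p, 1e-300))
    return ll


def polychoric(table, lo=-0.95, hi=0.95, tol=1e-4):
    """Step 2: golden-section search for the rho that maximizes the likelihood
    (assumes the likelihood has a single peak on the search interval)."""
    row_taus = cut_points([sum(r) for r in table])
    col_taus = cut_points([sum(c) for c in zip(*table)])

    def f(r):
        return log_lik(table, r, row_taus, col_taus)

    g = (math.sqrt(5.0) - 1.0) / 2.0
    c, d = hi - g * (hi - lo), lo + g * (hi - lo)
    fc, fd = f(c), f(d)
    while hi - lo > tol:
        if fc > fd:            # maximum lies in [lo, d]
            hi, d, fd = d, c, fc
            c = hi - g * (hi - lo)
            fc = f(c)
        else:                  # maximum lies in [c, hi]
            lo, c, fc = c, d, fd
            d = lo + g * (hi - lo)
            fd = f(d)
    return (lo + hi) / 2.0


# Hypothetical 5x5 cross-tabulation of two Likert items (rows: item A, cols: item B).
table = [
    [12, 8, 5, 3, 2],
    [7, 15, 12, 8, 4],
    [4, 11, 25, 20, 10],
    [2, 7, 19, 40, 30],
    [1, 3, 9, 28, 65],
]
print("estimated polychoric correlation:", round(polychoric(table), 3))
```

In a full WLSMV analysis, the matrix of such pairwise estimates, together with their asymptotic covariance matrix, replaces the Pearson matrix as input to the factor model.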

Table: Robust Model Fit Indices for Ordinal Data

| Fit Index | Value | Confidence Interval / Significance | Criterion |
| --- | --- | --- | --- |
| Scaled χ² / df | 39.236 / 41 | p = .549 | p > .05 (exact fit not rejected) |
| Robust CFI | 1.000 | - | > .95 |
| Robust RMSEA | 0.002 | 90% CI [0.000, 0.038] | < .05 |

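As the table shows, once the continuous-data assumption is dropped in favor of an ordinal model, the scaled chi-square (χ²) test is statistically non-significant (p = .549). The hypothesis of exact fit between the model and the data therefore cannot be rejected, and the robust CFI and RMSEA also meet their criteria.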
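The reported p-value can be cross-checked from the two numbers in the table. The sketch below assumes the scaled statistic is referred to an ordinary chi-square distribution with the model's 41 degrees of freedom; `chi2_sf` is a hypothetical helper written here with the standard library (a series expansion of the regularized incomplete gamma function), not a function from any SEM package.

```python
import math


def chi2_sf(x, df):
    """Upper-tail probability P(X > x) for a chi-square variable with df
    degrees of freedom, via the series for the lower incomplete gamma
    function. Adequate for moderate x; not tuned for extreme tails."""
    a, z = df / 2.0, x / 2.0
    if z <= 0.0:
        return 1.0
    term = 1.0 / a
    total = term
    n = 0
    while abs(term) > 1e-15 * abs(total):
        n += 1
        term *= z / (a + n)
        total += term
    lower = total * math.exp(a * math.log(z) - z - math.lgamma(a))
    return 1.0 - lower


p_value = chi2_sf(39.236, 41)  # values taken from the fit table
print(f"p = {p_value:.3f}")
```

The result can be compared with the p = .549 shown in the table. A value above .05 means only that the test does not reject exact fit; it is not by itself proof that the model is correct.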

What Are the Benefits to Your Research?

- Reduced Risk of Type-I and Type-II Errors: Most survey data are asymmetrically distributed (ceiling/floor effects). WLSMV models the observed response categories through thresholds on the latent variable, so skewed responses do not have to conform to a normal distribution.

- Level of Academic Evidence: Reviewers at Q1 journals increasingly object to the analysis of ordinal data with ML based on Pearson correlations. This reporting format helps avert rejection on the grounds of a "Methodological Flaw."