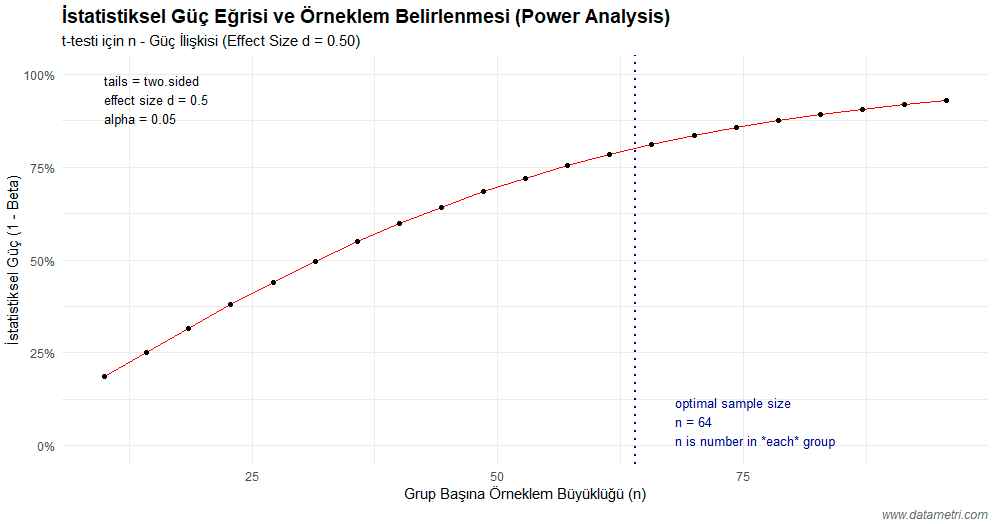

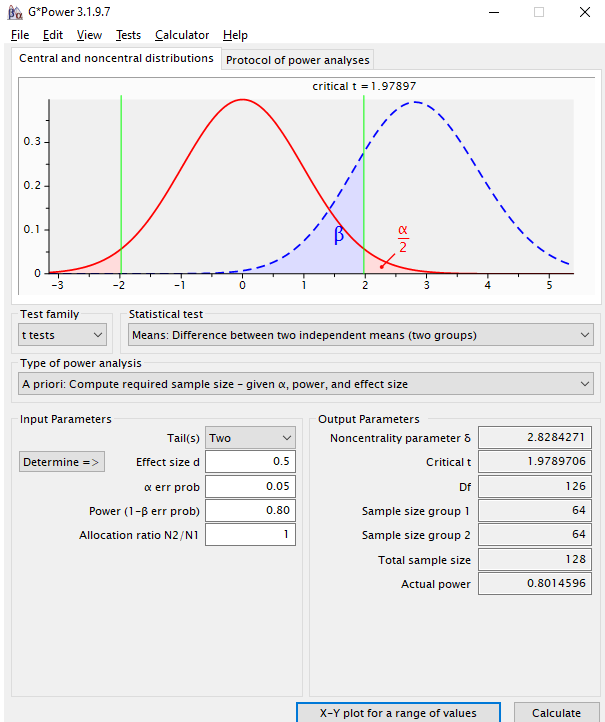

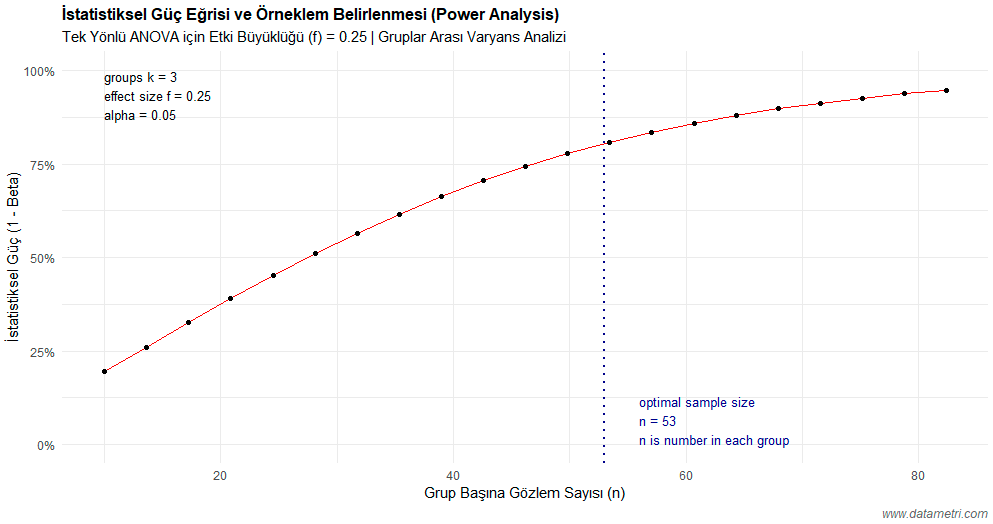

The inputs to a power analysis (effect size, alpha, and power) depend on the statistical test selected, because each test has its own effect size measure. Choosing the wrong test yields an incorrect sample size and, in turn, undermines the empirical validity of the research. Test selection and power analysis are therefore paired according to the following widely used conventions:

| Research Design | Test to Select | Effect Size Measure for Power Analysis |
|---|---|---|
| Comparison of two independent groups (e.g., experimental vs. control) | Independent-samples t-test | Cohen's d |
| Comparison of three or more groups (one-way design) | One-way ANOVA | Cohen's f |
| Linear relationship between continuous variables | Correlation test | Pearson's r (correlation coefficient) |
| Independence of categorical/nominal variables | Chi-square test | Cohen's w or Cramér's V |
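As a rough illustration of how the effect size measure in the table drives the required sample size, the sketch below uses standard normal-approximation formulas for two of the rows (Cohen's d and Pearson's r) and the usual conversion from eta squared to Cohen's f. The function names are hypothetical, and the defaults of alpha = 0.05 (two-sided) and power = 0.80 are assumptions chosen for the example. Dedicated tools such as G*Power use noncentral distributions, so their results can differ by a participant or two.

```python
import math
from statistics import NormalDist


def _z_sum(alpha: float, power: float) -> float:
    """Sum of the two-sided critical z value and the z value for the target power."""
    z = NormalDist()
    return z.inv_cdf(1 - alpha / 2) + z.inv_cdf(power)


def n_per_group_two_sample(d: float, alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate n per group for an independent-samples comparison (Cohen's d)."""
    return math.ceil(2 * (_z_sum(alpha, power) / d) ** 2)


def n_for_correlation(r: float, alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate total n for testing a Pearson correlation (Fisher z transform)."""
    return math.ceil((_z_sum(alpha, power) / math.atanh(r)) ** 2 + 3)


def cohens_f_from_eta_squared(eta_sq: float) -> float:
    """Convert eta squared to Cohen's f, the ANOVA effect size measure."""
    return math.sqrt(eta_sq / (1 - eta_sq))


if __name__ == "__main__":
    print(n_per_group_two_sample(0.5))      # medium effect, d = 0.5
    print(n_for_correlation(0.3))           # medium correlation, r = 0.3
    print(cohens_f_from_eta_squared(0.2))   # eta squared of 0.2
```

With these approximations, d = 0.5 gives 63 participants per group and r = 0.3 gives 85 participants in total. An exact t-based calculation for d = 0.5 typically reports 64 per group, which is why the approximation is best treated as a quick plausibility check rather than a final figure.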

- Cross-Validation Confidence: By cross-checking simulation-based power estimates from the R programming language against the analytical calculations of G*Power software, we strengthen the credibility of the methods section of your report.

- Ease of Publication Acceptance and Ethical Approval: We enable you to present the "A Priori Power Analysis Report" required by peer reviewers and clinical ethics committees, reported in APA style.

- Design Optimization: We provide analytical support not only on the question of "how many participants" but also on how to use a limited existing sample more efficiently through design techniques such as blocking and randomization.
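The cross-validation idea in the first point above (comparing a simulated power estimate with an analytical one) can be sketched in a few lines. This is a minimal illustration in Python rather than R, and it makes simplifying assumptions that are labeled in the code: two equal-sized groups, normally distributed outcomes, and a known standard deviation of 1, so that a z-test applies. The function names, the seed, and the number of simulations are hypothetical choices for the example.

```python
import math
import random
from statistics import NormalDist, fmean


def simulated_power(n_per_group: int, d: float, alpha: float = 0.05,
                    n_sims: int = 2000, seed: int = 12345) -> float:
    """Monte Carlo power estimate for a two-sided, two-sample z-test.

    Assumption: both groups are normal with known SD = 1, so the
    standardized mean difference equals Cohen's d.
    """
    rng = random.Random(seed)
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    se = math.sqrt(2 / n_per_group)  # standard error of the mean difference
    rejections = 0
    for _ in range(n_sims):
        control = [rng.gauss(0.0, 1.0) for _ in range(n_per_group)]
        treated = [rng.gauss(d, 1.0) for _ in range(n_per_group)]
        z = (fmean(treated) - fmean(control)) / se
        if abs(z) > z_crit:
            rejections += 1
    return rejections / n_sims


def analytic_power(n_per_group: int, d: float, alpha: float = 0.05) -> float:
    """Closed-form power of the same z-test, used as the reference value."""
    nd = NormalDist()
    z_crit = nd.inv_cdf(1 - alpha / 2)
    shift = d * math.sqrt(n_per_group / 2)
    return nd.cdf(shift - z_crit) + nd.cdf(-shift - z_crit)


if __name__ == "__main__":
    print(simulated_power(63, 0.5))
    print(analytic_power(63, 0.5))
```

If the two numbers agree to within simulation error, the sample size calculation is consistent under the stated assumptions; a large gap usually signals a mismatch between the assumed test and the simulated design.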