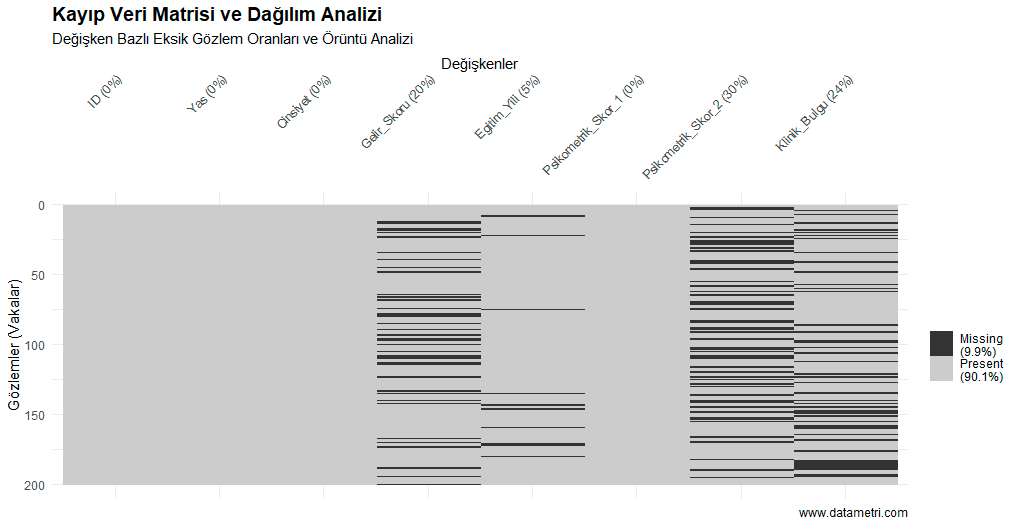

The presence of missing values (observations) in an academic dataset not only reduces the sample size of the study but also carries a severe risk of bias depending on the missing data mechanism. The statistical determination of whether the missingness in the data is random (e.g., via Little's MCAR test) is crucial for determining the multiple imputation strategy to be applied.

- If row-wise deletion (Listwise Deletion) is resorted to, does the remaining "complete-case" set meet the minimum n number required by the statistical power analysis?

- For variables where the missing rate exceeds critical thresholds, which of the variance-preserving algorithms—Multiple Imputation or FIML—should be preferred?

- Are the missing data clustering in a specific income group or demographic stratum, thereby systematically manipulating the results (selection bias)?

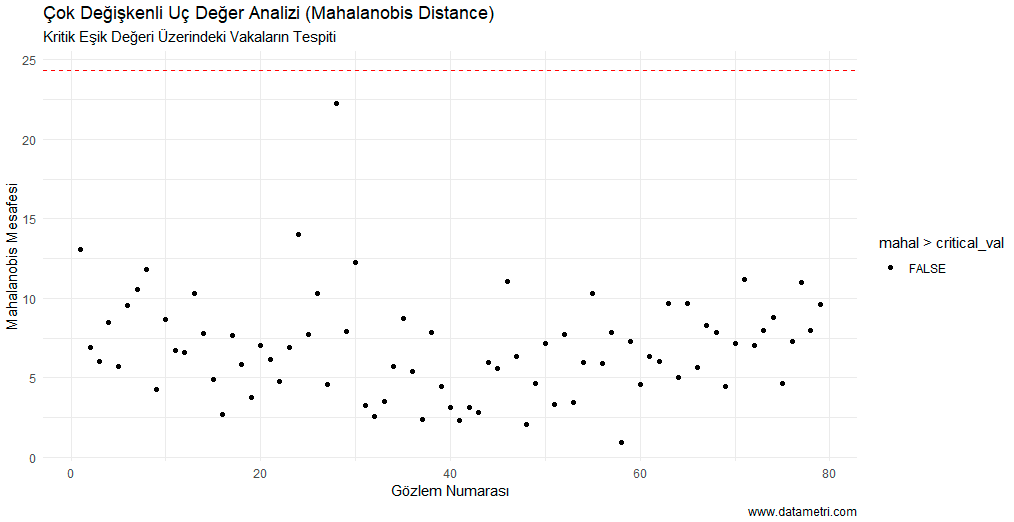

- Prevention of Selection Bias: Missing data management preserves the "representativeness" of the research. We eliminate the criticism of selection bias, which is the most frequent reason for rejection by peer-reviewers in the international academic publishing process, right from the start by scientifically reporting the missing data mechanisms (MCAR, MAR).